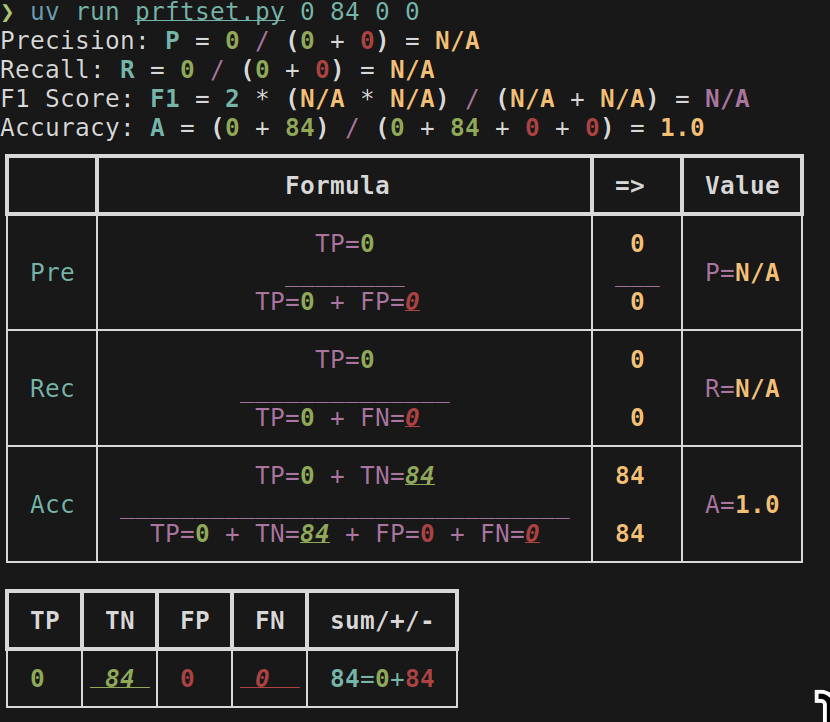

Tiny PRF demo program

I wrote this while debugging some metrics. It’s bad code, but I don’t want it to die. It shows the formulas for precision, recall, F-score, and accuracy, computed from the counts of TP, TN, FP, and FN.
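For reference, these are the four formulas the script renders, written out in LaTeX. Each one is undefined when its denominator is zero, and the script prints N/A in that case. The second form of $F_1$ is the usual simplification and matches the first whenever $TP > 0$.

```latex
\begin{align}
P   &= \frac{TP}{TP + FP} \\
R   &= \frac{TP}{TP + FN} \\
F_1 &= \frac{2 \cdot P \cdot R}{P + R} = \frac{2\,TP}{2\,TP + FP + FN} \\
A   &= \frac{TP + TN}{TP + TN + FP + FN}
\end{align}
```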

Depends only on rich (plus pdbpp for debugging) and is runnable with uv, as described in 250128-2149 Using uv as shebang line.

Usage: `uv run prftset.py tp tn fp fn`
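To sanity-check the numbers the script prints, here is a plain-Python sketch of the same arithmetic, run on the sample values from the script's commented-out example (tp=100, tn=50, fp=10, fn=5). The `prf_sketch` helper is a hypothetical name, not part of the script, and it skips the rich formatting entirely.

```python
from __future__ import annotations


def prf_sketch(tp: int, tn: int, fp: int, fn: int) -> dict[str, float | None]:
    """Hypothetical stand-alone version of the script's formulas.

    Returns None for any metric whose denominator is zero.
    """
    precision = tp / (tp + fp) if tp + fp else None
    recall = tp / (tp + fn) if tp + fn else None
    if precision is None or recall is None or precision + recall == 0:
        f1 = None
    else:
        f1 = 2 * precision * recall / (precision + recall)
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total if total else None
    return {"P": precision, "R": recall, "F1": f1, "A": accuracy}


scores = prf_sketch(tp=100, tn=50, fp=10, fn=5)
for name, value in scores.items():
    print(name, "N/A" if value is None else round(value, 3))
# P 0.909
# R 0.952
# F1 0.93
# A 0.909
```

The same values should show up in the script's output for `uv run prftset.py 100 50 10 5`.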

# /// script
# dependencies = [
#   "rich",
#   "pdbpp",  # you're worth it
# ]
# ///
from rich import print
from rich.layout import Layout  # unused; left over from the commented-out use_layout()
from rich.table import Table
import argparse


def get_scores(tp, tn, fp, fn) -> dict[str, int | float | str | None]:
    """Calculate precision, recall, F1 score, and accuracy.

    Inputs are the counts of true positives, true negatives, false positives,
    and false negatives. A metric with a zero denominator is reported as "N/A".
    """
    precision = tp / (tp + fp) if (tp + fp) > 0 else None
    recall = tp / (tp + fn) if (tp + fn) > 0 else None
    f1_score = (
        2 * (precision * recall) / (precision + recall)
        if precision is not None and recall is not None and (precision + recall) > 0
        else None
    )
    accuracy = (tp + tn) / (tp + tn + fp + fn) if (tp + tn + fp + fn) > 0 else None

    # Round to three decimals for display
    f_precision = float(f"{precision:.3f}") if precision is not None else "N/A"
    f_recall = float(f"{recall:.3f}") if recall is not None else "N/A"
    f_f1_score = float(f"{f1_score:.3f}") if f1_score is not None else "N/A"
    total_ds = tp + tn + fp + fn

    res = {
        "TP": tp,
        "TN": tn,
        "FP": fp,
        "FN": fn,
        "total": total_ds,
        "P ": f_precision,
        "R ": f_recall,
        "F ": f_f1_score,
        "A ": float(f"{accuracy:.3f}") if accuracy is not None else "N/A",
        # "A ": accuracy,
    }
    return res


def use_table(tp, tn, fp, fn, precision, recall, accuracy):
    total = tp + tn + fp + fn
    total_positive = tp + fn
    total_negative = tn + fp

    # Summary table with the raw counts
    table_tn = Table()
    table_tn.add_column("TP", justify="center", style="green")
    table_tn.add_column("TN", justify="center", style="green underline italic")
    table_tn.add_column("FP", justify="center", style="red")
    table_tn.add_column("FN", justify="center", style="red underline italic")
    table_tn.add_column("sum/+/-", justify="right", style="cyan")
    table_tn.add_row(
        f"[bold green]{str(tp)}[/bold green]",
        f"[bold green]{str(tn)}[/bold green]",
        f"[bold red]{str(fp)}[/bold red]",
        f"[bold red]{str(fn)}[/bold red]",
        f"[bold cyan]{str(total)}[/bold cyan]="
        + f"[bold green]{str(total_positive)}[/bold green]+"
        + f"[bold red]{str(total_negative)}[/bold red]",
    )

    # Formula table: each metric is drawn as a fraction
    # (numerator row, divider row, denominator row)
    table = Table()
    table.add_column("", justify="right", style="cyan", no_wrap=True)
    table.add_column("Formula", justify="center", style="magenta")
    table.add_column("=>", justify="center", style="magenta")
    table.add_column("Value", justify="center", style="magenta")

    # Precision
    table.add_row(
        "",
        f"TP=[bold green]{str(tp)}[/bold green]",
        f"[bold yellow]{str(tp)}[/bold yellow]",
        "",
    )
    table.add_row(
        "Pre",
        "_" * (len(str(tp)) + len(str(fp)) + 6),
        "___",
        f"P=[bold yellow]{precision}[/bold yellow]",
    )
    table.add_row(
        "",
        f"TP=[bold green]{str(tp)}[/bold green] + FP=[bold red underline italic]{str(fp)}[/bold red underline italic]",
        f"[bold yellow]{str((tp + fp))}[/bold yellow]",
        "",
    )
    table.add_section()

    # Recall
    table.add_row(
        "",
        f"TP=[bold green]{str(tp)}[/bold green]",
        f"[bold yellow]{str(tp)}[/bold yellow]",
        "",
    )
    table.add_row("Rec", "______________", "", f"R=[bold yellow]{recall}[/bold yellow]")
    table.add_row(
        "",
        f"TP=[bold green]{str(tp)}[/bold green] + FN=[bold red underline italic]{str(fn)}[/bold red underline italic]",
        f"[bold yellow]{str((tp + fn))}[/bold yellow]",
        "",
    )
    table.add_section()

    # Accuracy
    table.add_row(
        "",
        f"TP=[bold green]{str(tp)}[/bold green] + TN=[bold green underline italic]{str(tn)}[/bold green underline italic]",
        f"[bold yellow]{str((tp + tn))}[/bold yellow]",
        "",
    )
    table.add_row(
        "Acc",
        "______________________________",
        "",
        f"A=[bold yellow]{accuracy}[/bold yellow]",
    )
    table.add_row(
        "",
        f"TP=[bold green]{str(tp)}[/bold green] + TN=[bold green underline italic]{str(tn)}[/bold green underline italic] + FP=[bold red]{str(fp)}[/bold red] + FN=[bold red underline italic]{str(fn)}[/bold red underline italic]",
        f"[bold yellow]{str((tp + tn + fp + fn))}[/bold yellow]",
        "",
    )

    print(table)
    print(table_tn)


def pretty_scores(scores: dict[str, int | float | str | None], draw=True):
    tp, tn, fp, fn = scores["TP"], scores["TN"], scores["FP"], scores["FN"]
    precision, recall, f1_score = scores["P "], scores["R "], scores["F "]
    accuracy = scores["A "]

    # print( f"| TP: {scores['TP']}, TN: {scores['TN']}, FP: {scores['FP']}, FN: {scores['FN']} | ")
    # print(
    #     f"| Precision: {scores['P ']}, Recall: {scores['R ']}, F1 Score: {scores['F ']} |"
    # )

    # One-line version of each formula with the actual numbers substituted
    print(
        f"Precision: [bold cyan]P[/bold cyan] = [bold green]{tp}[/bold green] / ([bold green]{tp}[/bold green] + [bold red]{fp}[/bold red]) = [bold yellow]{precision}[/bold yellow]"
    )
    print(
        f"Recall: [bold cyan]R[/bold cyan] = [bold green]{tp}[/bold green] / ([bold green]{tp}[/bold green] + [bold red]{fn}[/bold red]) = [bold yellow]{recall}[/bold yellow]"
    )
    print(
        f"F1 Score: [bold cyan]F1[/bold cyan] = 2 * ([bold yellow]{precision}[/bold yellow] * [bold yellow]{recall}[/bold yellow]) / ([bold yellow]{precision}[/bold yellow] + [bold yellow]{recall}[/bold yellow]) = [bold magenta]{f1_score}[/bold magenta]"
    )
    print(
        f"Accuracy: [bold cyan]A[/bold cyan] = ([bold green]{tp}[/bold green] + [bold green]{tn}[/bold green]) / ([bold green]{tp}[/bold green] + [bold green]{tn}[/bold green] + [bold red]{fp}[/bold red] + [bold red]{fn}[/bold red]) = [bold yellow]{scores['A ']}[/bold yellow]"
    )

    # use_layout(tp, tn, fp, fn, precision, recall)
    use_table(tp, tn, fp, fn, precision, recall, accuracy)


def main():
    args = parse_args()

    # Example usage
    # tp = 100
    # tn = 50
    # fp = 10
    # fn = 5
    tp = args.tp
    tn = args.tn
    fp = args.fp
    fn = args.fn

    scores = get_scores(tp, tn, fp, fn)
    pretty_scores(scores)


def parse_args():
    parser = argparse.ArgumentParser(
        description="Calculate precision, recall, and F1 score."
    )
    # use positional arguments for tp, tn, fp, fn
    parser.add_argument("tp", type=int, help="True Positives", default=0)
    parser.add_argument("tn", type=int, help="True Negatives", default=0)
    parser.add_argument("fp", type=int, help="False Positives", default=0)
    parser.add_argument("fn", type=int, help="False Negatives", default=0)

    # parser.add_argument("--tp", type=int, required=True, help="True Positives")
    # parser.add_argument("--tn", type=int, required=True, help="True Negatives")
    # parser.add_argument("--fp", type=int, required=True, help="False Positives")
    # parser.add_argument("--fn", type=int, required=True, help="False Negatives")
    return parser.parse_args()


if __name__ == "__main__":
    main()
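One detail about `parse_args()` above: argparse only falls back to `default` for a positional argument when it is declared with `nargs="?"` (or `"*"`), so as written all four counts are required and `default=0` has no effect. If the intent was to let trailing counts be omitted, a minimal sketch could look like this. The `build_parser` name is hypothetical, and whether optional counts are actually wanted is an assumption.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Hypothetical variant of parse_args() where omitted counts default to 0."""
    parser = argparse.ArgumentParser(
        description="Calculate precision, recall, and F1 score."
    )
    for name, label in [
        ("tp", "True Positives"),
        ("tn", "True Negatives"),
        ("fp", "False Positives"),
        ("fn", "False Negatives"),
    ]:
        # nargs="?" makes the positional optional, so `default` is actually used
        parser.add_argument(name, type=int, nargs="?", default=0, help=label)
    return parser


# Only tp and tn are given; fp and fn fall back to 0
args = build_parser().parse_args(["100", "50"])
print(args.tp, args.tn, args.fp, args.fn)  # 100 50 0 0
```

Values are still filled left to right, so this only helps when the missing counts are the trailing ones; the commented-out `--tp`/`--tn` flags would be the way to go for anything else.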

